Pyraug Case Study 1

Use of Pyraug software to perform MRI classification

Case Study 1: Classification on 3D MRI (ADNI & AIBL)

Introduction

A Riemannian Hamiltonian VAE model

Classification set up

Data Splitting

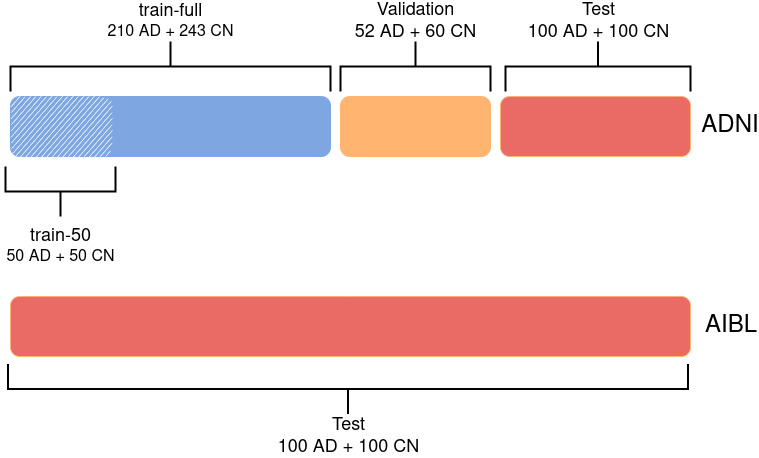

The ADNI data set was split into three sets: train, validation and test. First, the test set was created using 100 randomly chosen participants for each diagnostic label (i.e. 100 CN, 100 AD). The rest of the data set was split such that 80% was allocated to training and 20% to validation. The authors ensured that the age, sex and site distributions of the three sets were not significantly different. The train set is referred to as train-full in the following. In addition, a smaller training set (denoted train-50) was extracted from train-full. This set comprised only 50 images per diagnostic label, instead of 243 CN and 210 AD for train-full. It was ensured that the age and sex distributions of train-50 and train-full were not significantly different. This was not done for the site distribution, as there are more than 50 sites in the ADNI data set (so they could not all be represented in this smaller training set). The AIBL data was never used for training or hyperparameter tuning and served only as an independent test set.
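The splitting procedure described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the authors' code: the function name `split_participants`, its parameters and the toy participant identifiers are hypothetical, the 80/20 split is applied per diagnostic label (the text does not say whether it was), and the checks on age, sex and site distributions are not reproduced.

```python
import random
from collections import defaultdict


def split_participants(labels, n_test_per_label=100, train_frac=0.8, seed=0):
    """Split participants into train / validation / test lists.

    labels: dict mapping a participant id to its diagnostic label.
    Hypothetical helper; it does not check age, sex or site distributions.
    """
    rng = random.Random(seed)

    # Group participant ids by diagnostic label (sorted for reproducibility).
    by_label = defaultdict(list)
    for pid, label in sorted(labels.items()):
        by_label[label].append(pid)

    train, val, test = [], [], []
    for label, pids in by_label.items():
        rng.shuffle(pids)
        # Fixed number of randomly chosen test participants per label.
        test.extend(pids[:n_test_per_label])
        rest = pids[n_test_per_label:]
        # Remaining participants: train_frac to training, the rest to validation.
        n_train = round(train_frac * len(rest))
        train.extend(rest[:n_train])
        val.extend(rest[n_train:])
    return train, val, test


# Toy example: 20 "CN" and 20 "AD" participants, 5 test participants per label.
toy_labels = {f"sub-{i:03d}": ("CN" if i % 2 == 0 else "AD") for i in range(40)}
train, val, test = split_participants(toy_labels, n_test_per_label=5)
```

Splitting at the participant level, as here, keeps all data from one participant in a single set.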

Data Processing

All the data was processed as follows:

Classifier

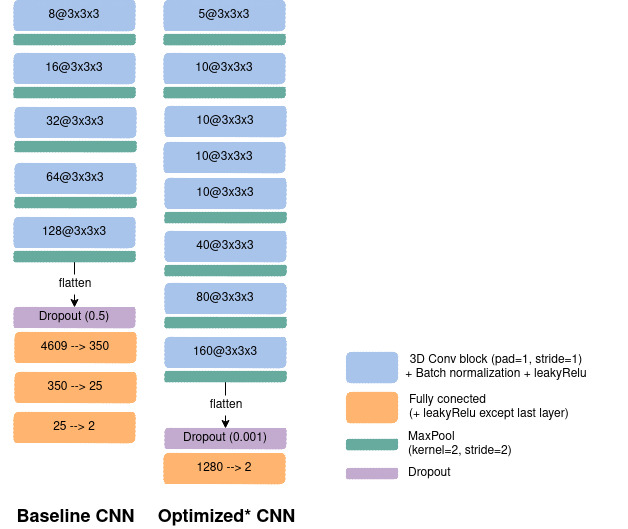

To perform this classification task, a CNN was used, with two different paradigms for choosing the architecture. First, the authors reused the same architecture as in

Augmentation Set up

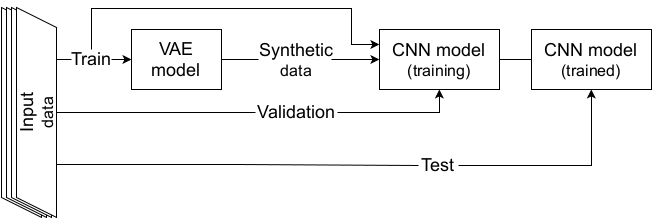

In the meantime, an RHVAE was trained on each class of the train sets (train-50 or train-full) so that new synthetic data could be generated. Note that the VAE and the CNN shared the same training set and that no augmentation was performed on the validation or test sets.
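Because one generative model is trained per class, every synthetic sample can inherit the label of the model that produced it. The sketch below illustrates that bookkeeping only. It does not use the Pyraug API: `build_synthetic_set` is a hypothetical helper, and the `generators` callables are placeholders standing in for trained per-class models.

```python
def build_synthetic_set(real_by_class, generators, n_synthetic):
    """Return labelled real and synthetic training samples.

    real_by_class: dict mapping a label to its list of real training samples.
    generators: dict mapping a label to a callable n -> list of n synthetic
        samples (a placeholder for a generative model trained on that class).
    """
    real, synthetic = [], []
    for label, samples in real_by_class.items():
        real.extend((x, label) for x in samples)
        # Each generated sample takes the label of its class-specific generator.
        synthetic.extend((x, label) for x in generators[label](n_synthetic))
    return real, synthetic


# Toy example with scalar "images" and constant placeholder generators.
real_by_class = {"CN": [0.1, 0.2], "AD": [0.9]}
generators = {"CN": lambda n: [0.15] * n, "AD": lambda n: [0.85] * n}

real, synthetic = build_synthetic_set(real_by_class, generators, n_synthetic=3)
augmented = real + synthetic  # training data only; validation and test stay untouched
```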

The baseline (resp. optimized) CNNs were then trained for 100 (resp. 50) epochs, using the cross-entropy loss as both the training and validation loss. Balanced accuracy was also computed at the end of each epoch. The models were trained on either 1) only the real images; 2) only the synthetic samples created by the RHVAE; or 3) the augmented training set (real + synthetic), with 20 independent runs for each experiment. The final model was the one that obtained the highest validation balanced accuracy during training.
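Balanced accuracy is the mean of the per-class recalls, so each diagnostic label contributes equally even when the classes differ in size. The following is a minimal sketch of that metric and of selecting the epoch with the highest validation score. The function names and toy values are illustrative assumptions, not taken from the study.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of the per-class recalls."""
    recalls = []
    for c in sorted(set(y_true)):
        # Indices of the samples whose true label is c.
        idx = [i for i, t in enumerate(y_true) if t == c]
        correct = sum(1 for i in idx if y_pred[i] == c)
        recalls.append(correct / len(idx))
    return sum(recalls) / len(recalls)


def best_epoch(val_scores):
    """Index of the epoch with the highest validation score (first one on ties)."""
    return max(range(len(val_scores)), key=lambda e: val_scores[e])


# Toy example: recall is 3/4 for class 0 and 1/2 for class 1.
y_true = [0, 0, 0, 0, 1, 1]
y_pred = [0, 0, 0, 1, 1, 0]
score = balanced_accuracy(y_true, y_pred)

# Hypothetical per-epoch validation balanced accuracies.
selected = best_epoch([0.60, 0.80, 0.75])
```

In the toy example plain accuracy would be 4/6, while balanced accuracy is (0.75 + 0.5) / 2 = 0.625, which shows how the metric penalizes errors on the smaller class more heavily.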

Results

Some of the main results obtained in this case study are presented below. We refer the reader to

The Pyraug software allowed for a significant gain in the model's classification results, even when the CNN was optimized on the real data only (no random search was performed for the augmented data set), and even though the data sets were small and the data high-dimensional and very challenging.